Category Archives: JSM

A novel way to gamble on the NCAA tournament…

I saw a talk at JSM where I was introduced to a fun new (well, new to me) game to play during the NCAA tournament. First, teams are assigned a price based on their seed. This can be done in many ways, but in the talk the one seeds cost 25 cents, the two seeds cost 19 cents, and so on down to the 15 and 16 seeds, which were a penny each. The goal is to choose a set of teams that costs, in total, one dollar and that will win the most games in the NCAA tournament. You could pick all four number one seeds, which costs exactly one dollar, but the most wins they can earn is 19 (four each to reach the Final Four, then one for each of the two semifinals and one for the championship). So, according to the speaker, this usually won’t get you the win. First of all, this game is awesome. Once you can stop thinking about how awesome this game is, the next logical question is: How do you choose the optimal set of teams?

Douglas Noe and his student Geng Chen used an evolutionary algorithm to optimize the selection of teams, and they used Ken Pomeroy’s rankings as a guide to the probability that one team will beat another team in the tournament. Now, I don’t think I had ever heard of evolutionary algorithms, and, if I had, I’d totally forgotten about them. But they are wicked cool. Here is the Wikipedia page for evolutionary algorithms, and it’s worth checking out. Does anyone have any suggestions for a good introductory resource on evolutionary algorithms?
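To give a feel for the idea, here is a minimal sketch of an evolutionary algorithm applied to this game. It is not Noe and Chen’s method. Only the 1, 2, 15, and 16 seed prices come from the talk; every other price and all of the expected-win numbers are made-up placeholders (nothing here is derived from Ken Pomeroy’s ratings), and the dollar is treated as a spending cap, which is my assumption. Each roster is a set of teams, the fitness is the sum of expected wins, and each generation keeps the fitter half of the population and fills the rest with mutated crossovers.

```python
import random

# Prices in cents by seed. Only seeds 1, 2, 15, and 16 come from the talk;
# every other value is a made-up placeholder.
PRICE = {1: 25, 2: 19, 3: 13, 4: 12, 5: 11, 6: 10, 7: 8, 8: 5,
         9: 5, 10: 4, 11: 4, 12: 3, 13: 2, 14: 2, 15: 1, 16: 1}

# Hypothetical expected tournament wins by seed (NOT from Pomeroy's ratings).
EXP_WINS = {1: 3.3, 2: 2.4, 3: 1.8, 4: 1.5, 5: 1.1, 6: 1.1, 7: 0.9, 8: 0.7,
            9: 0.6, 10: 0.6, 11: 0.6, 12: 0.5, 13: 0.25, 14: 0.15,
            15: 0.05, 16: 0.01}

BUDGET = 100  # one dollar, treated here as a cap (an assumption)
TEAMS = [(region, seed) for region in range(4) for seed in range(1, 17)]


def cost(picks):
    """Total price in cents of a roster given as a set of team indices."""
    return sum(PRICE[TEAMS[i][1]] for i in picks)


def fitness(picks):
    """Sum of the (hypothetical) expected wins of the roster."""
    return sum(EXP_WINS[TEAMS[i][1]] for i in picks)


def repair(picks, rng):
    """Drop random teams until the roster fits the budget."""
    picks = set(picks)
    while cost(picks) > BUDGET:
        picks.remove(rng.choice(sorted(picks)))
    return frozenset(picks)


def mutate(picks, rng):
    """Flip one random team in or out of the roster, then repair."""
    picks = set(picks)
    picks.symmetric_difference_update({rng.randrange(len(TEAMS))})
    return repair(picks, rng)


def crossover(a, b, rng):
    """Child keeps each team picked by either parent with probability 1/2."""
    child = {i for i in sorted(a | b) if rng.random() < 0.5}
    return repair(child, rng)


def evolve(generations=200, pop_size=60, seed=0):
    rng = random.Random(seed)
    population = [repair(rng.sample(range(len(TEAMS)), 8), rng)
                  for _ in range(pop_size)]
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        parents = population[: pop_size // 2]  # keep the fitter half
        children = [
            mutate(crossover(rng.choice(parents), rng.choice(parents), rng), rng)
            for _ in range(pop_size - len(parents))
        ]
        population = parents + children
    return max(population, key=fitness)


best = evolve()
print(sorted(TEAMS[i] for i in best), cost(best), round(fitness(best), 2))
```

With real prices and win probabilities plugged in, the same loop would apply. The repair step is just one simple way to keep rosters within the budget.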

Cheers.

JSM 2011 – Statistical Disclosure Control via Suppression: Some thoughts

I attended a session at JSM (in Miami. In August….) where the big topic was statistical disclosure control (SDC) via suppression in linked tables. As far as I can tell, suppression is the most popular and widely used method of SDC for tables, but its application seems extremely ad hoc. Data disseminating organizations all have different rules for when they feel that a cell count or total is too small to be released, but it seems that this is all done by educated guessing as to which cell values are unsafe. I wonder if there are any formal guarantees that can be provided to individuals or organizations whose data is being disseminated in tables and protected via suppression. Certainly tables with sensitive values cannot simply be released to the public, and something needs to be done to address the problem. Cell suppression is something, and it certainly offers something in the way of protection (what is that something?). But I don’t know whether cell suppression is more about an appearance of privacy (which is still very important) than about actually providing privacy.
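To make the worry concrete, here is a minimal sketch of a threshold rule for suppression. The cutoff of 5 and the counts are made up for illustration and are not any agency’s actual rule. It shows one well-known weakness: if a single small cell in a row is hidden but the row total is published, the hidden value can be recovered by subtraction.

```python
THRESHOLD = 5  # hypothetical cutoff; each organization picks its own rule

# Made-up counts for one row of a table, published along with its total.
row = {"A": 40, "B": 3, "C": 27}
total = sum(row.values())


def suppress(cells, threshold=THRESHOLD):
    """Replace counts below the threshold with None (hide small cells only)."""
    return {k: (v if v >= threshold else None) for k, v in cells.items()}


def recover(published_cells, published_total):
    """If exactly one cell is hidden, subtracting from the total reveals it."""
    hidden = [k for k, v in published_cells.items() if v is None]
    if len(hidden) != 1:
        return None
    shown = sum(v for v in published_cells.values() if v is not None)
    return hidden[0], published_total - shown


published = suppress(row)
print(published)
print(recover(published, total))
```

This is why suppression schemes typically hide additional cells beyond the small ones. In linked tables the same subtraction can be attempted across every table that shares a margin.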

Of course, I’d love someone to respond to me and tell me that I am dead wrong and put my mind at ease.

Cheers.

JSM 2011 review / Miami in August!

I don’t know how many of you have ever been to Miami Beach in August, but it’s not exactly…..comfortable. But that’s where my quest for knowledge took me in the first week of August to the Joint Statistical Meetings (JSM) 2011.

I attended a really interesting session, “Statistical Analyses of Judging in Athletic Competitions: The Role of Human Nature”. I missed the first talk about racial bias in Major League Baseball (MLB) umpires, but I caught the last three, which were all very interesting. John Emerson, who organized the session, presented “Statistical Sleuthing by Leveraging Human Nature: A Study of Olympic Figure Skating”. Ryan Rodenberg (Blog: Sports Law Analytics) presented his paper “Perception ≠ Reality: Analyzing Specific Allegations of NBA Referee Bias”. His approach was rather interesting: rather than look for overall biases in NBA referees, he attempted to validate or invalidate specific allegations levelled against specific referees. His talk was followed by Kurt Rotthoff, who presented his paper “Bias in Sequential Order Judging: Primacy, Recency, Sequential Bias, and Difficulty Bias”, which focused on judging in gymnastics. One of the key findings of this work was, from his abstract, “Contestants who attempt higher difficulty increase their execution score, even when difficulty and execution scores are judged separately.” This makes me a little bit nervous, because the better athletes are the ones attempting the more difficult routines, and they may receive higher execution scores simply because they are better athletes to begin with. Is there anyone out there who has ever been a competitive gymnast who has any thoughts on this?

Cheers!

JSM (in the wild)

I’ve been in Vancouver the past week for the Joint Statistical Meetings (JSM). Here is a collection of my thoughts and comments from the few days I was at the conference.

On Monday I went to the section on Survey Research Methods and saw Meena Khare of the National Center for Health Statistics (NCHS) and Laura Zayatz of the United States Census Bureau give talks. They both spoke about the measures their institutions take before releasing data to the public. The NCHS looks for uncommon combinations of variables that could be used to possibly re-identify individuals in the data. Both organizations first remove obvious identifiers and then go on to make the released data more private. For instance, if the number of observations in a data set with a particular combination of variables is n and the number of observations with that combination is N, they would consider any combination of variables where n/N<.33 at risk for disclosure. At the U.S. Census Bureau, they have used something called data swapping to protect public release data sets in the last two Censuses (2000 and 2010). Along with data swapping, the Census will also be using partially synthetic data to protect individual privacy in public release data in 2010.

Several things strike me about this.

-The methods that these organizations use to protect confidentiality are certainly going to increase privacy compared to a release of raw data; however, there doesn’t seem to be any way to know whether what is being done provides “enough” privacy.

-It’s clear that many different government organizations have issues that require some use of disclosure limiting techniques; however, it seems that each organization is creating its own rules, and there is limited discussion between organizations aimed at a standard policy for data sharing.

-There doesn’t actually seem to be any definition of what is considered a disclosure. For instance, if government data is released and I discover through some technique that someone definitely has HIV, then clearly a disclosure has taken place. However, if I use the same data to discover that someone definitely does not have HIV, a disclosure has still taken place, but the consequence is much less damaging. Furthermore, consider a situation where, prior to the data release, I know a particular individual has a 50 percent chance of having HIV. After the data release, I can infer that there is a 99 percent chance that they have HIV. Clearly, I would consider this a disclosure. But what if the probabilities shift from only 50 percent (pre-data release) to 75 percent (post-data release), or from 50 percent to 55 percent? At what point is “too much” information being released? It seems as if this issue receives less attention than is warranted.

-Finally, I believe that the ultimate solution to the disclosure problem is a careful combination of policy and disclosure limiting techniques. Policy issues include defining how much privacy must be maintained by a given technique, as well as legal consequences for knowingly disclosing private information. Statistics has an obligation to provide steadily improving statistical disclosure techniques, along with metrics for measuring the privacy of a given technique.
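As a small illustration of the HIV example above, here is one possible way to put numbers on how much an intruder’s belief moves after a release. The two measures used (the absolute change in probability and the ratio of posterior to prior odds) are my own choice for the sketch, not something proposed in the talks, and where to draw the line would still be a policy decision.

```python
def odds(p):
    """Convert a probability into odds."""
    return p / (1 - p)


def disclosure_shift(prior, posterior):
    """Return (absolute change in probability, posterior odds / prior odds)."""
    return posterior - prior, odds(posterior) / odds(prior)


# The three prior-to-posterior shifts from the example above.
scenarios = [(0.50, 0.99), (0.50, 0.75), (0.50, 0.55)]

for prior, posterior in scenarios:
    change, odds_ratio = disclosure_shift(prior, posterior)
    print(f"{prior:.2f} -> {posterior:.2f}: "
          f"change {change:+.2f}, odds ratio {odds_ratio:.2f}")
```

On the odds scale the three shifts look very different (roughly 99, 3, and 1.2 times the prior odds), which is one way to see why a single cutoff for “too much” is hard to agree on.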

Later on Monday, I saw the tail end of the talk by David Purdy titled “Statisticians: 3, Computer Scientists: 35”. The abstract for the talk was:

“John Tukey and Leo Breiman warned us that a day would come when statistics would need to focus more on computing, or risk losing good students to computer science. The Netflix Prize provides many examples of how our field needs to do more.

In the top 2 teams, participants with a computer science background vastly outnumbered those with a statistics background. There are a number of lessons that the field of statistics can learn from the fact that undergraduates in CS were well equipped to compete, while statisticians at all levels were not well prepared to implement advanced algorithms.

In this talk, I will address methodological issues arising with such a large, sparse dataset, how it demands serious computational talents, and where there is ample room for statistics to make contributions.”

I only saw the end of the talk, but I feel like I got the point. He notes how programs in statistics need to expose students to more aspects of computing. One quote from his talk that particularly shocked me was from a prominent statistician referring to the Netflix prize data set: (I’m paraphrasing) “I can’t do anything with the data, there is just too much of it.” (If anyone knows the actual quote, I would love to have it). Too little data may often be a problem, but too much data should be a blessing, rather than a curse.

When he talks about computing, he is referring to implementing complex algorithms to analyze the data. In my experience, though, people struggle with simply managing data of this size. It should be a simple problem to deal with, but I have both had it happen to me and seen it happen to others. When I was in grad school working towards my master’s degree right out of undergrad, we were given a problem in a consulting class with a “large” (several thousand observations) amount of data (well, “large” to someone with no experience managing data). We (my group) knew exactly what we wanted to do with the data, but we were unable to manage the data in a way that would make it useful for analysis. So we did nothing. The moral of the story is that, while we were taught the techniques for analyzing the data well, we were never taught and had never learned any useful data manipulation techniques, which rendered our statistical educations useless. It was not until I got my first job that I learned, out of necessity, data management techniques including SAS data steps, SAS macros, and SQL.

When I returned to school to pursue a Ph.D., I saw many students with no work experience struggling through all of the same problems that I had with managing data. The same old “I know exactly what I want to do, but I can’t organize the data.” Oftentimes in grad classes, books or teachers will describe a data set as “large” when it has several hundred or several thousand observations. This seems like inadequate preparation for working in industry, as my first jobs often dealt with data sets with millions of observations and, later, a summer consulting project involved billions of observations.

Currently, there are no required computing or data management classes in my program for earning a Ph.D. in statistics. I think there should be a required class in every statistics program covering data management issues and, at least, a solid introduction to programming.

After David Purdy’s talk, he and Chris Volinsky took questions. One interesting question that came up was about a second Netflix prize. However, Chris noted that this had to be cancelled because of privacy concerns. I’ve written before (or at least posted on Twitter) about some researchers who claim to have de-anonymized the data from the Netflix prize and, as a result, a lawsuit has been filed. (Netflix’s Impending (But Still Avoidable) Multi-Million Dollar Privacy Blunder) Whether you agree with canceling the prize over privacy concerns or not, it is clear that disclosure limitation is currently a big issue that certainly cannot be ignored.

On Tuesday, I went to one of the sports research sections and saw two talks before I left to go see a talk about partially synthetic data in longitudinal data. The first speaker, Shane Jensen, spoke about evaluating fielders’ abilities in baseball using a method he proposed called Spatial Aggregate Fielding Evaluation (SAFE). The previous link explains how their evaluation of players works and gives measures of performance for each player. Probably the most shocking result of his work is that, averaged over 2002-2008, SAFE evaluated Derek Jeter as the worst shortstop who met the minimum number of balls in play (BIP). In contrast, SAFE rates Alex Rodriguez as the second best shortstop over this same period, even though he now plays third to allow Jeter to play SS.

The next speaker was Ben Baumer, statistical analyst for the Mets (and native of the 413 area code). He spoke about his paper, “Using Simulation to Estimate the Impact of Baserunning Ability in Baseball”. One of the interesting things I took away from his talk is his claim that a player’s speed in breaking up a double play is one of the important aspects of base running, but that it is often largely or completely ignored when evaluating a player’s base running ability.

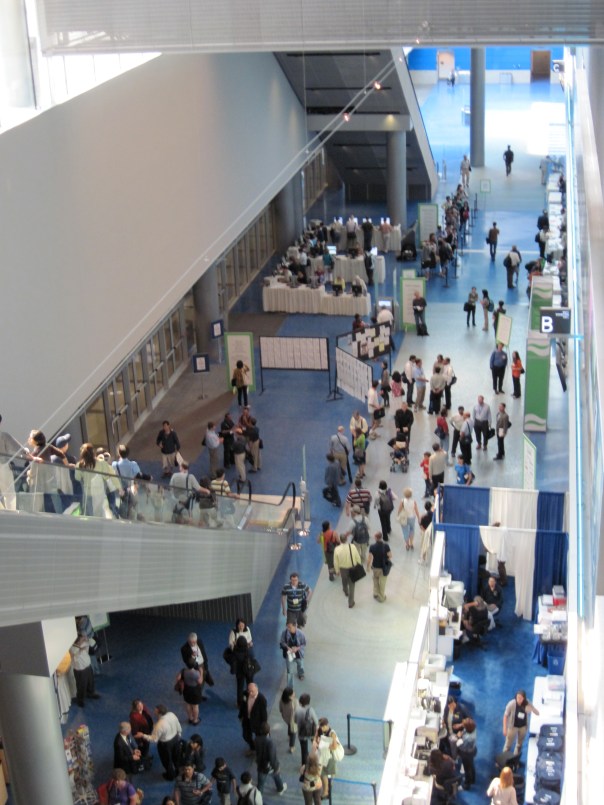

Before I end, I’d like to say thanks to all the speakers I saw this past week and, finally, I’ll leave you with a view of Vancouver from the convention center.

Cheers.