Category Archives: Politics

One last post on field goals and presidential politics

I think I’ve finally finished these. Thanks to everyone for the good suggestions. These are based on this post from yesterday.

Here are both Trump and Biden together. The first is Trump, the second Biden. It’s not quite symmetric, but it’s close. For instance, Biden winning Minnesota is about a 37 yard field goal. Trump winning Minnesota is a 57 yard field goal. It would be kind of cool if it worked in both directions, but it doesn’t quite work, unfortunately.

If you look at a bunch of states that Trump needs to win, like Florida, Michigan, Pennsylvania, and Wisconsin, they are all in the 60 (like Florida) to 70 (like Michigan) yard range. So for Trump to win he needs to hit a few 60 yard field goals in a row (granted, they are correlated, so it’s a little bit easier than that). It’s not easy to hit a 60 yard field goal, but it does happen!

Cheers.

Yunel Escobar, Homosexual Slurs, and the 2008 Presidential Election

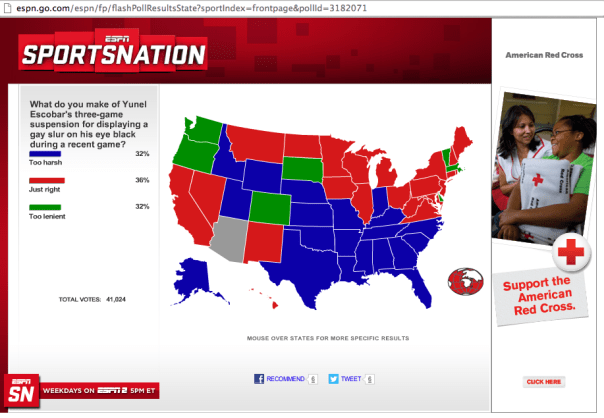

I just came across this poll question on ESPN about people’s opinions regarding Yunel Escobar’s suspension, and I couldn’t help but notice that this map (below) looks very similar to the 2008 presidential election map. I suppose you could view the map below as essentially a state-by-state snapshot of how residents (well, residents who read ESPN.com and vote in their polls) feel about homosexual slurs. Take a look at this map:

Now take a look at this map that shows which states voted for McCain and Obama in the 2008 presidential election:

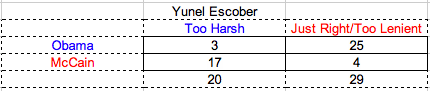

The similarities are striking. The states that judge the punishment to be too harsh, the blue states in ESPN’s map, align very strongly with the red states in the presidential map. Similarly, the green (Too lenient) and red (Just right) states align very closely with the blue states in the presidential election map. Of the six green states, all but South Dakota voted for Obama in the last election. Of the red states in the ESPN map, only Montana, North Dakota, and West Virginia went for McCain in 2008. Likewise, of the blue states in the ESPN map, only Indiana, North Carolina, and Florida went for Obama. Finally, here is a two-by-two table with a breakdown of the relationship between Yunel Escobar opinions and presidential states. (The p-value for the Fisher test of independence between rows and columns is 8.213e-07.) So, it seems there is a significant association between how a state’s residents feel about Yunel Escobar’s suspension for using a gay slur and how that state voted in the 2008 presidential election.
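For anyone curious how the Fisher test mentioned above works on a two-by-two table, here is a minimal sketch in plain Python. The function name and the counts in the example call are my own placeholders, not the table from this post, so it will not reproduce the p-value quoted above. It sums the hypergeometric probabilities of every table with the same margins that is no more likely than the observed one, which is the usual two-sided definition.

```python
from math import comb

def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher exact p-value for the 2x2 table [[a, b], [c, d]]."""
    row1, row2, col1 = a + b, c + d, a + c
    n = row1 + row2

    def prob(x):
        # Hypergeometric probability of seeing x in the top-left cell
        # when the row and column totals are held fixed.
        return comb(row1, x) * comb(row2, col1 - x) / comb(n, col1)

    lowest = max(0, col1 - row2)
    highest = min(row1, col1)
    observed = prob(a)
    # Add up every table that is no more likely than the observed one
    # (the small tolerance guards against floating-point ties).
    return sum(
        prob(x)
        for x in range(lowest, highest + 1)
        if prob(x) <= observed * (1 + 1e-7)
    )

# Placeholder counts only: rows are poll opinion groups, columns are
# McCain / Obama states. These are NOT the counts from the post's table.
p_value = fisher_exact_two_sided(18, 3, 4, 25)
print(f"{p_value:.3g}")
```

A tiny p-value here says the row and column classifications are unlikely to be independent, which is the sense in which the association is called significant.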

Cheers.

Brown/Warren, Polls, Sampling, and Confidence Intervals

I was reading an article on Huffington Post about the Massachusetts Senate election, and they link to an article that cites a poll conducted by Western New England University’s (née College) Polling Institute. I was interested in this because I grew up near the college, and I had never heard of this polling institute before. So, I decided to take a look. I was reading their survey and got to the end, where it had a description of the methodology. I was casually reading it and some things jumped out at me. I have one question and one comment.

First the question:

They state in their methodology:

The Polling Institute dialed household telephone numbers, known as “landline numbers,” and cell phone numbers for the survey. In order to draw a representative sample from the landline numbers, interviewers first asked for the youngest male age 18 or older who was home at the time of the call, and if no adult male was present, the youngest female age 18 or older who was at home at the time of the call.

This seems to me like it will bias the sample, as they are much more likely to be taking a sample of men than women. They do note that “The landline and cell phone data were combined and weighted to reflect the adult population of Massachusetts by gender, race, age, and county of residence using U.S. Census estimates for Massachusetts”, but then why ask for the youngest male over 18? Is this a valid method? It seems that in the final results they have a nearly even split of men vs women, but it seems to me that using this method you’re going to get a sample that is biased toward younger, male voters. Can someone explain to me why this is or is not valid? I really don’t know, but it seems odd to me.

And now the comment:

In the next paragraph, they state:

All surveys are subject to sampling error, which is the expected probable difference between interviewing everyone in a population versus a scientific sampling drawn from that population. The sampling error for a sample of 444 likely voters is +/- 4.6 percent at a 95 percent confidence interval. Thus if 55 percent of likely voters said they approved of the job that Scott Brown is doing as U.S. Senator, one would be 95 percent sure that the true figure would be between 50.4 percent and 59.6 percent (55 percent +/- 4.6 percent) had all Massachusetts voters been interviewed, rather than just a sample. The margin of sampling error for the sample of 545 registered voters is +/- 4.2 percent at a 95 percent confidence interval. Sampling error increases as the sample size decreases, so statements based on various population subgroups are subject to more error than are statements based on the total sample. Sampling error does not take into account other sources of variation inherent in public opinion studies, such as non-response, question wording, or context effects.

This is simply an incorrect explanation of a confidence interval (which I’ve actually written about before, a long time ago when I first started this blog). In frequentist statistics there is a true value that you are trying to estimate, and it is assumed to be fixed and unknown (hence you are trying to estimate it). A sample of data is then collected to estimate the unknown quantity, and a confidence interval can be constructed. However, the probability that the true figure is in this particular confidence interval is either 0 or 1, since there is nothing stochastic about the true value that is being estimated. The “95 percent sure” interpretation will lose you points on a statistics test, so I don’t know what they mean by it here. The correct interpretation is that 95% of similarly constructed intervals will contain the true value. This is a different statement than being 95% sure the true value is between the upper and lower limits of the one confidence interval you have constructed from your one sample. Imagine that you conducted this survey with exactly the same N many times. Each time you will come up with a different estimate of the true figure and a different confidence interval. If you examined all of these confidence intervals together, about 95% of them would contain the true value of the parameter that is being estimated. This is a pretty common misinterpretation of the meaning of a confidence interval, and it took me quite a long time to understand the difference, but what concerns me here is that this isn’t an intro stats course, it’s a polling institute.
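The “repeat the survey many times” idea is easy to sketch as a small simulation. This is only an illustration under assumptions of my own: the true approval rate of 0.55 is made up, each respondent is an independent draw, and the interval is the simple normal-approximation (Wald) interval rather than whatever the Polling Institute actually computes. For reference, the worst-case margin 1.96 × sqrt(0.25 / 444) comes out to roughly 0.0465, close to the quoted +/- 4.6 percent.

```python
import math
import random

def wald_interval(successes, n, z=1.96):
    """Normal-approximation 95% interval for a proportion."""
    p_hat = successes / n
    margin = z * math.sqrt(p_hat * (1 - p_hat) / n)
    return p_hat - margin, p_hat + margin

def coverage(true_p, n, repetitions, seed=0):
    """Fraction of simulated surveys whose interval contains true_p."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(repetitions):
        # One simulated survey: n independent yes/no answers.
        successes = sum(rng.random() < true_p for _ in range(n))
        low, high = wald_interval(successes, n)
        if low <= true_p <= high:
            hits += 1
    return hits / repetitions

# true_p = 0.55 is a hypothetical "truth"; n = 444 matches the sample
# size quoted in the methodology.
rate = coverage(true_p=0.55, n=444, repetitions=2000)
print(f"share of intervals containing the true value: {rate:.3f}")
```

Any single interval either contains 0.55 or it doesn’t; the 95% describes the long-run share of intervals that do.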

Cheers.

Presidential Candidates, Search Engine Auto-Complete, and Word Clouds: Bicycles, Unicorns, and American Presidential Politics

Finally, I’ve managed to post something that’s not about @BillBarnwell’s flawed “study” titled “Mere Mortals” (Here’s why he’s wrong. Here is what happens when I apply his logic to something else: you get nonsense). Anyway, here is an update to the presidential candidates search engine auto-complete word clouds (the original post, with a description of how the data is collected and processed, is here).

According to search engines, Obama is a gay, socialist/communist, Muslim terrorist version of the Antichrist (or possibly not even a living thing, but a bicycle), and Romney is an idiot, douche bag, ass hole, Mormon unicorn that lies.

Cheers.

Idiots, Liars, and Unicorns: Presidential Politics and Search Engines

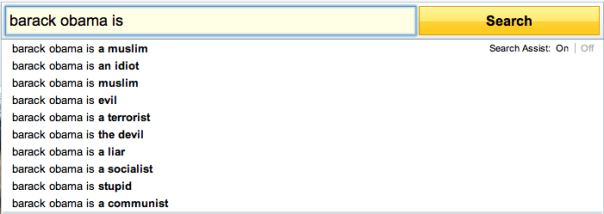

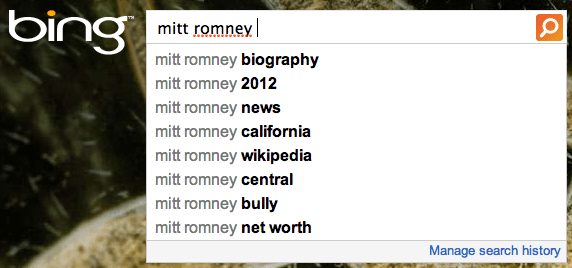

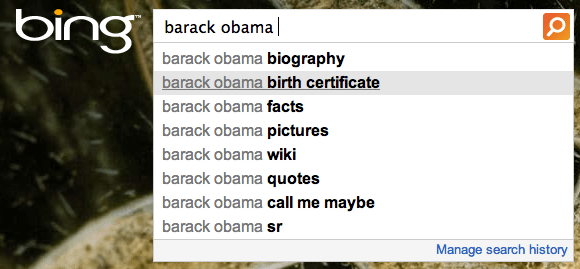

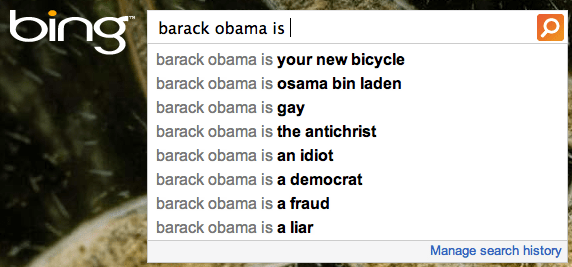

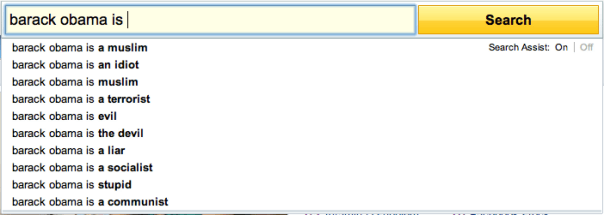

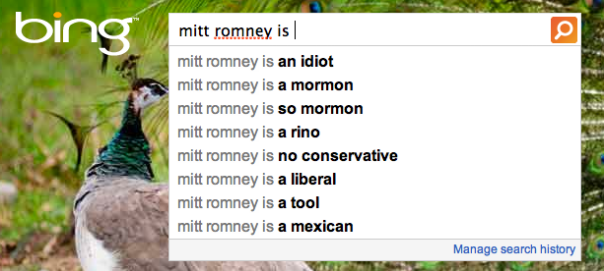

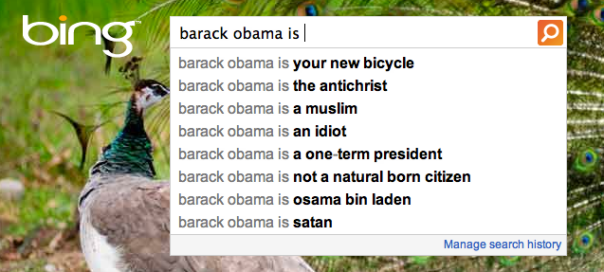

In the past I’ve posted search engine auto-completes for some of the presidential candidates. For instance, here are Romney and Obama’s results from 5/30/2012, here are Romney and Obama’s results from 4/16/2012, and here are the Republican primary candidates from 12/29/2011. Below you will find the auto-completes for the two presidential candidates from 8/16/2012. I’m also including word clouds now.

I’m using three search engines (Google, Bing, and Yahoo!) and two search terms for each candidate (Mitt Romney, Mitt Romney is, Barack Obama, Barack Obama is). I’m then weighting the terms from 10 to 1 for Google and Yahoo and 8 to 1 for Bing (as they only return 8 search terms), based on the order they appear in the auto-completes. For the first two word clouds, I’m additionally weighting the search engines, with Google getting weight 11.7, Bing 2.7, and Yahoo 2.4. (These numbers are approximately the number, in billions, of searches performed on each site in February 2012.)
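Here is a rough sketch of the weighting scheme just described. The function name and the example suggestion lists are placeholders I made up for illustration (they are not the real auto-complete data), and I’m assuming that a term appearing in more than one list simply has its weights added together.

```python
from collections import defaultdict

# Engine weights from the post: approximate billions of searches, February 2012.
ENGINE_WEIGHT = {"google": 11.7, "bing": 2.7, "yahoo": 2.4}
# Number of auto-complete suggestions each engine returns, per the post.
MAX_TERMS = {"google": 10, "bing": 8, "yahoo": 10}

def cloud_weights(suggestions, use_engine_weight=True):
    """Combine ranked suggestion lists into one weight per term."""
    totals = defaultdict(float)
    for engine, terms in suggestions.items():
        top = MAX_TERMS[engine]
        scale = ENGINE_WEIGHT[engine] if use_engine_weight else 1.0
        for position, term in enumerate(terms[:top]):
            # First suggestion gets the top weight (10 or 8), then 9 or 7, ...
            totals[term] += scale * (top - position)
    return dict(totals)

# Placeholder suggestions only, NOT the screenshots' contents.
example = {
    "google": ["alpha", "beta"],
    "bing": ["beta", "gamma"],
    "yahoo": ["alpha"],
}
weights = cloud_weights(example)
by_rank_only = cloud_weights(example, use_engine_weight=False)
```

Passing `use_engine_weight=False` gives the order-only weighting, which is the variant that makes sense when the terms are shown separately for each search engine.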

The first word cloud represents all of the words, with weighting, for both presidential candidates. Kind of makes you think a little bit about the political discourse in this country when some of the top words for presidential candidates are idiot, liar, and antichrist. (For those of you new to the internet, here is the explanation for “your new bicycle”.)

This next word cloud is the same as the previous one, except it is separated by candidate. The blue and red words are Obama and Romney, respectively. If you’re wondering about the “Unicorn” on the Romney side of the word cloud, you may be interested in this facebook page. According to them, “There has never been a conclusive DNA test proving that Mitt Romney is not a unicorn. We have never seen him without his hair — hair that could be covering up a horn. No, we cannot prove it. But we cannot prove that it is not the case.” Truer words have never been spoken….

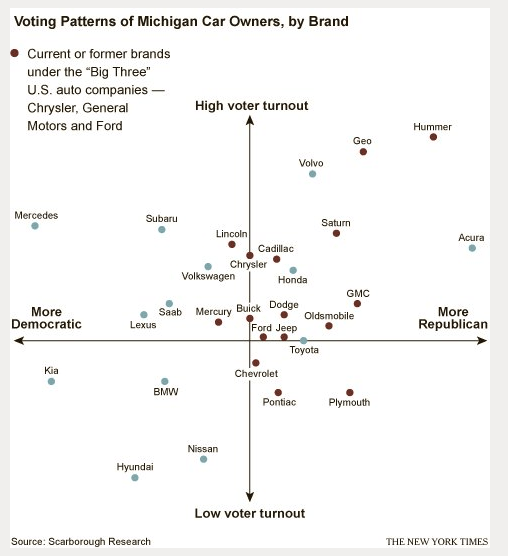

The final word cloud of the trio breaks down the auto-complete terms by search engine. Note that the words in this word cloud are not weighted by search engine, but they are weighted by order within each search engine. I think it’s kind of interesting that, for Yahoo, the big words are religions: Muslim and Mormon. This makes me wonder if the choice of search engine might in some way predict political affiliation, and, apparently, I’m not the only one who’s thought about this. Looks like a group called Engage has already looked into this, and their results are summarized nicely in this graphic. According to them, Googlers tend to be more Democratic and Bingers (?) tend to be more Republican. (I don’t see Yahoo on their graphic, which I find odd.) Also, according to Alexa.com, Bing users tend to be older than the average internet user, slightly more likely to have “some college” education, and slightly less likely to have a graduate degree. Google and Yahoo users tend to be very much the average internet user, with the exception that they are much less likely to be over the age of 65.

Below, you’ll find screenshots of the Google, Yahoo, and Bing auto-completes if you’re interested in the raw data that I used.

YAHOO!

BING

Cheers.

Which Nations Consume the Most Water?

Which Nations Consume the Most Water? via Chartporn.org

Cheers.

Presidential Candidate Auto-Completes – 5/30/2012

I’ve been following search engine auto-completes for the presidential candidates for a few months now. Here are Romney and Obama’s results from 4/16/2012, and here are the Republican primary candidates from 12/29/2011. Below you will find the auto-completes for the two presidential candidates from 5/30/2012.